Current evidence does not clearly support cardiovascular guidelines that encourage high consumption of polyunsaturated fatty acids and low consumption of total saturated fats.

So says the team led by Rajiv Chowdhury in their “Association of Dietary, Circulating, and Supplement Fatty Acids With Coronary Risk: A Systematic Review and Meta-analysis” in the journal Annals of Internal Medicine.

If true, this is mighty bad news for those politicians, bureaucrats, and other busybodies who have made careers nagging citizens to avoid cream, cheese, butter, ghee, suet, tallow, lard, and, of course, red meats (Wikipedia has a list of tasty fats). Examples of such folk include the government’s newly formed Dietary Guidelines Advisory Committee. Reason magazine noted that “A look through the transcript of last week’s hearing reveals the word ‘policy’ (or ‘policies’) appears 42 times. The word ‘tax’ appears three times.”

There is nothing the government likes better than telling you what to do[1]; it’s for your own good. But they do like to sound sciency about their dictates, which is why papers like Chowdhury’s will be disquieting. The paper takes the wind out of the sails of the low-fat and “good”-fat touts. It is also a sober reminder of how delicate and changeable the evidence on diet and health really is.

Before we discuss the results, if you don’t already know why you should (roughly) double every confidence interval you see, please first read the notes below.

Chowdhury’s paper is a meta-analysis, which is a way to group studies of a similar nature and say something about them in toto. There are two kinds of meta-analysis. The first groups studies the majority of which individually did not show “statistical significance”, i.e. showed no effect, but which when grouped (somehow) show the hoped-for effect. Because of the misinterpretation of things like confidence intervals, these kinds of meta-analyses should rarely be trusted.

The second kind of meta-analysis, and the kind which Chowdhury did, is to group studies the majority of which did not show significance but when grouped…also show insignificance. Because standard statistical evidence is designed to give positive results so easily, these kinds of meta-analyses can almost always be trusted.

Of course, no meta-analysis is ever perfect: there are too many ways of going wrong; but this one seems fairly solid.

Our authors examined studies which paired cardiac outcomes and various kinds of fats. For example, the group of fatty acid supplementation observational studies gave a joint relative risk for coronary disease from 0.98 to 1.07 (this is the 95% confidence interval; which if it contains 1 is “not significant”). For use in real predictions, to first approximation, double this to get 0.93 to 1.12. In other words, fatty acid supplementation does squat for avoiding heart disease.

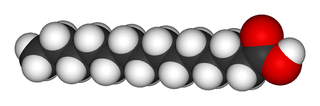

Similar results were had for saturated fats (0.91 to 1.10), monounsaturated fats (0.78 to 0.97), long-chain ω-3 polyunsaturated fats (0.90 to 1.06), and even, glory be, trans fatty acids (1.06 to 1.27; but doubled is 0.96 to 1.38). The paper lists several more, but the results are similar to these. (See at the bottom of this page some minor numerical corrections admitted by Chowdhury, none of which change the conclusions.)
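For those who want to check the doubling arithmetic, here is a minimal Python sketch. It assumes "doubling" means stretching the interval's width by a factor of 2 about its arithmetic midpoint; the post does not spell out the exact rule, but this assumption reproduces the rounded figures quoted above. The function name `widen_interval` is made up for illustration.

```python
def widen_interval(lower, upper, factor=2.0):
    """Stretch an interval about its midpoint by `factor`.

    Assumption: the rule of thumb is applied symmetrically about the
    arithmetic midpoint of the stated interval.
    """
    mid = (lower + upper) / 2
    half_width = (upper - lower) / 2 * factor
    return mid - half_width, mid + half_width


# Stated 95% intervals for relative risk, taken from the text above.
stated = {
    "fatty acid supplementation": (0.98, 1.07),
    "trans fatty acids": (1.06, 1.27),
}

for label, (lo, hi) in stated.items():
    wide_lo, wide_hi = widen_interval(lo, hi)
    # An interval that contains 1 is "not significant".
    contains_one = wide_lo <= 1.0 <= wide_hi
    print(f"{label}: {lo:.2f} to {hi:.2f} becomes "
          f"{wide_lo:.3f} to {wide_hi:.3f} (contains 1: {contains_one})")
```

Under that assumption the supplementation interval widens to roughly 0.935 to 1.115, and the trans fat interval to roughly 0.955 to 1.375, which now contains 1.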

To repeat the juiciest findings (emphasis mine):

Our findings do not support cardiovascular guidelines that promote high consumption of long-chain ω-3 and ω-6 and polyunsaturated fatty acids and suggest reduced consumption of total saturated fatty acids.

They also say, “Nutritional guidelines on fatty acids and cardiovascular guidelines may require reappraisal to reflect the current evidence.”

But will they be reappraised? Doubtful. It would be too much like admitting a mistake.

————————————————————————————-

[1] From Reason: “The Washington Free Beacon’s Elizabeth Harrington reported last week that NIH had spent nearly $3,000,000 in recent years to fund studies looking into the possibility of using text messages and web tools to treat obesity.”

Notes:

On confidence intervals: (1) They don’t mean what frequentists say they mean; in practice they are always given the definition of Bayesian credible intervals. A credible interval speaks of the guess of a probability model parameter, “There is a 95% chance the true value of the parameter lies in this interval, given all the data we have and assuming the model is true.”

(2) The Bayesian credible interval does not mean what it says. Instead, everybody always takes the interval to speak of reality (about real risk, say) and not a model parameter. Because of this, as a rough rule of thumb, always multiply the width of the stated interval by about (at least) 2. See this or this article for insight.

Thanks to @Mangan150 where I first learned of this study.

Update: About the paper’s data corrections.


Doctors claim that our liking for fatty or sugary foods explains why so many people die from heart attacks. This is the medical orthodoxy.

However, until the 1960s, the exact opposite was believed with equal certainty. In the 1930s the prevailing view of a healthy diet was one rich in fat, low in fiber. More animal protein was held to be the answer to all of our nutritional problems. Bread and other high carbohydrate foods were called empty calories. There are fads in medicine which are promoted as if they came down the mountain on stone tablets.

$3,000,000 in recent years to fund studies looking into the possibility of using text messages and web tools to treat obesity.

I hate seeing stuff like this. The number of years isn’t mentioned but if it were, say, three, then they spent roughly $1M/yr to task fewer than 5 people to look into this. An FTE is roughly $0.5M/yr when employing senior consultants so the 5 people is an upper limit.

If it’s a given that obesity is a problem, I would think it reasonable to investigate. Bet they spent many times this establishing obesity as a problem. Why pick at nits?

If you think the FTE value is out of line, recently I’ve heard that street performers in Boston can make $500-700 per day. My ex used to bring home between $300 and $500 per night working as a waitress and this was back in the ’80s.

DAV,

Yeah, but that’s “sexy” sounding science. How could they not fund studies like that? How can you know it won’t work before you study it?

I’m pretty sure that the Paleo diet isn’t going to be included on Obama’s Climate Change dietary guidelines…

Jim S,

Actually, if the eco-nuts get their way, the Paleo diet is going to be very important as we will have been reduced back to hunter/gatherers.

Briggs.

It was a pretty small effort and not an unreasonable thing for them to do.

What I was griping about are the gratuitous visceral punches that are becoming popular as the media yellows with age. A typical example: “Tonight there was an airliner crash in Timbuktu and 353 people died including an infant and a PUPPY!” as if the really important aspect were the deaths of the infant and puppy.

The NIH project might have been a total waste of money but mentioning it is on the level of using a loss of three drops of water while discarding the contents of a bucket as the example of waste. Really? The two pint spill on the way to the discard site or the tossing out of the rest weren’t better examples?

What about the total amount of money wasted pursuing and propping the latest pet theory using questionable science? Building websites and phone apps was the best that could be found?

Bleh!

Dav,

“It was a pretty small effort and not an unreasonable thing for them to do.”

It would have been unreasonable even if they had only spent one dollar on it. The problem is not the total spend. It’s the spend relative to the likely impact that is the problem. Even at a total spend of only $1 they will have outspent the value of any impact it could possibly have.

Do you have any idea how much money gets spent in this country on weight loss schemes? And yet obesity gets worse and worse. The idea that this project could have any impact > nil is absurd.

MattS,

“And yet obesity gets worse and worse.” What do you base this statement on?

The following link is of interest.

http://junkfoodscience.blogspot.ca/2008/05/war-on-childhood-obesity-is-showing-its.html

I’m going to trust the Ivy League educated people got it right this time.

https://www.princeton.edu/main/news/archive/S26/91/22K07/

It’s not the fat consumption and never was. Too bad Dr. Atkins had a heart attack and so many dismissed his message – post hoc ergo propter hoc.

I believe Dr Atkins died from complications following a fall.